If your business runs on documents, invoices, contracts, claims forms, KYC packets, lab reports, shipping manifests, RFPs, you already know the dirty secret of “digital transformation.” Most of it is still humans copying fields from PDFs into spreadsheets at 2 a.m.

I’ve spent the last several years building custom AI software for companies drowning in exactly this problem. Last week, OpenAI released GPT-5.5, and for the first time, I’m telling clients the conversation has fundamentally shifted. Not “AI is getting better.” Something more specific: the unit economics of document extraction just broke in your favor.

Let me explain why, and what it actually means if you’re sitting on warehouses of unstructured paperwork.

What Actually Shipped on April 23rd

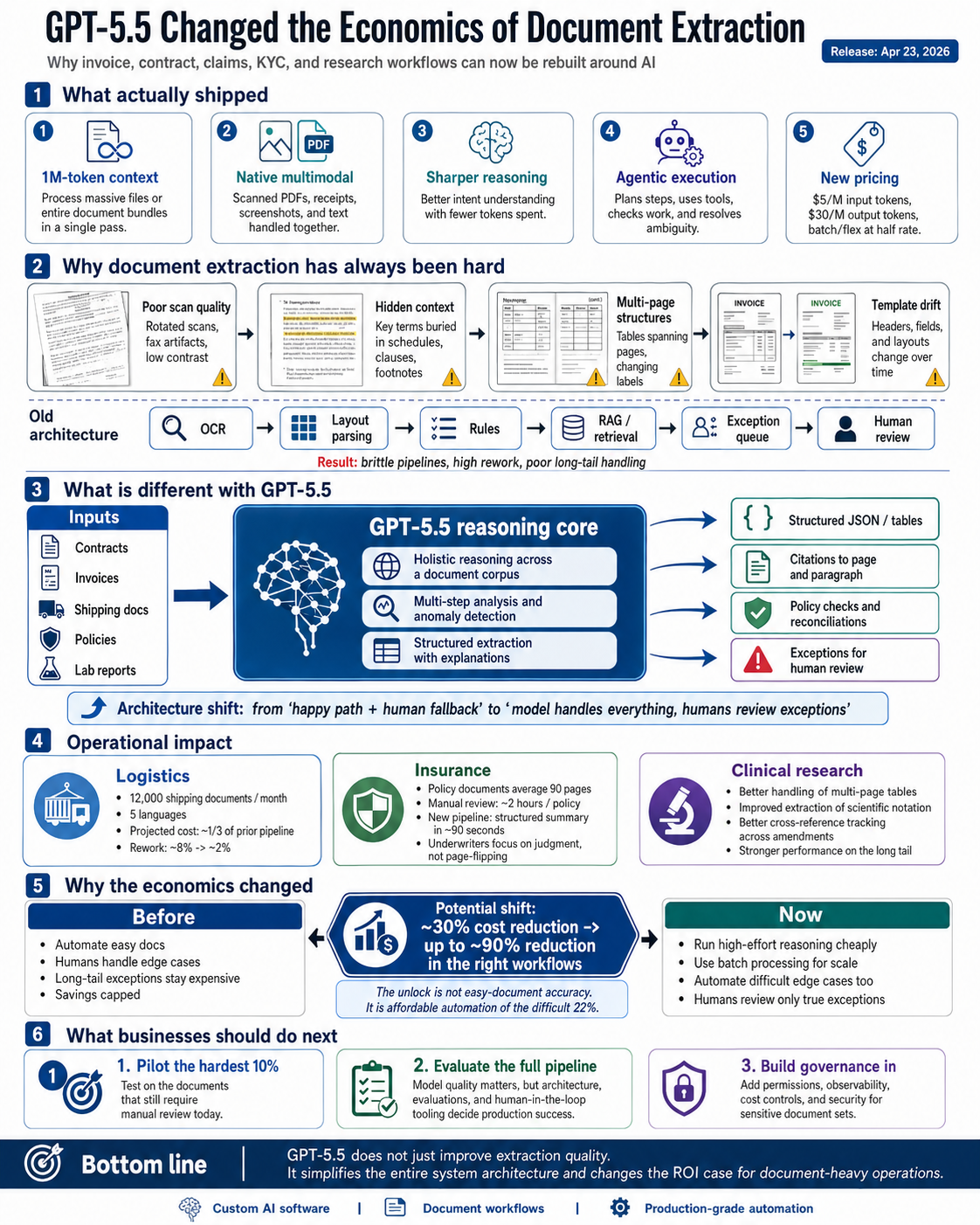

OpenAI released GPT-5.5 (and the more powerful GPT-5.5 Pro variant) on April 23, 2026. The headline from OpenAI is that this is their “smartest and most intuitive to use model yet.” That’s marketing language. Here’s what matters operationally:

- A 1 million token context window in the API. That means you can hand the model a 1,500-page contract bundle, or an entire quarter of supplier invoices, in a single call. No more chunking gymnastics.

- Better intent understanding with fewer tokens. OpenAI is positioning this as a “faster, sharper thinker for fewer tokens” compared to GPT-5.4, meaning the same job gets done with less compute spent on the model thinking out loud.

- Native multimodal handling. Scanned PDFs, photos of receipts, screenshots of legacy ERP screens, it processes text and images in one pass, without you stitching together a separate OCR pipeline.

- Multi-step “agentic” execution. You can hand it a messy task, “go through this folder, extract every line item, reconcile against last quarter’s, and flag anomalies”, and trust it to plan, use tools, check its work, and keep going when something is ambiguous.

Pricing is $5 per million input tokens and $30 per million output tokens for the standard model, with batch and flex pricing at half rate. That last part matters more than people are giving it credit for. I’ll come back to it.

Why Document Extraction Has Always Been The Hardest “Easy” Problem

Before we talk about the upgrade, let me say plainly what nearly every operations leader I work with has struggled to articulate: document extraction is brutal because real documents are not what software vendors pretend they are.

The pitch decks always show you a clean invoice. Tidy fields. Consistent layout. Reality is:

- A scan of a fax of a printout of a PDF, slightly rotated.

- A 47-page master service agreement where the payment terms live in Schedule C, paragraph 14, but Schedule C references “the Effective Date” defined on page 2.

- A clinical trial document where the dosage table spans three pages and uses footnote markers that change meaning across sections.

- A government statistical publication where the column headers don’t match the data, because someone added a row in 2019 and never fixed the template.

For years, the answer was either (a) hire a vendor whose product handles 70% of cases and breaks unacceptably on the long tail, or (b) hire a team in low-cost geography to type things by hand. Both options have a hard ceiling on what they can deliver.

Earlier generative models pushed that ceiling up, GPT-5.4, for example, was already reaching around 78% extraction accuracy on enterprise documents in published evaluations. Good, but not “fire the contractors” good. The remaining 22% is where compliance breaks, audit findings happen, and your CFO loses sleep.

What’s Actually Different With 5.5

Three shifts matter for businesses dealing with document-heavy operations.

- Reasoning across an entire document corpus, not just within one file.

The 1M token context window sounds like a spec sheet item, but it changes the architecture you should build. Previously, if you wanted to extract data from a 200-page contract and cross-reference it against 50 prior contracts to flag deviations, you had to build a retrieval pipeline, vectorize everything, embed, query, hope, pray. Now? You can feed all of it in one shot and ask the model to reason holistically. For the right kind of task, you skip three weeks of RAG infrastructure work.

- Genuine multi-step autonomy on messy inputs.

The pattern I’m seeing in production, and that early enterprise partners on Databricks have reported on benchmarks like OfficeQA, which tests document-heavy multi-step analytical tasks against real Treasury Bulletins, is that 5.5 will plan its own approach. You give it: “Here are 400 supplier invoices for Q1. Identify any that violate our payment terms policy, flag duplicates, and produce a remediation list.” It doesn’t need you to script each step. It will go retrieve the relevant policy clause, parse each invoice, build the comparison, and check itself. The number of brittle if-statements you used to write to glue this together collapses.

- The economics finally favor automation at the long tail.

This is the part most analysts miss. The big unlock isn’t accuracy on easy documents, we already had that. It’s that with batch pricing at half the standard rate, processing your difficult, edge-case 22% with high-effort reasoning is now cheap enough to do unconditionally. You stop building “happy path + human-in-the-loop fallback” architectures and start building “model handles everything, humans review exceptions” architectures. That sounds like a small distinction. It’s the difference between a 30% cost reduction and a 90% cost reduction.

What This Looks Like For Real Businesses

Let me ground this in the kinds of engagements I’ve been having lately.

A logistics client processes around 12,000 shipping documents a month, bills of lading, customs forms, certificates of origin, in five languages. Their previous setup was a hybrid OCR-plus-rules-plus-team-of-eight pipeline that cost them roughly six figures annually and produced about 8% rework. With a 5.5-based pipeline I’m scoping for them now, the projected cost is roughly a third of that, with rework expected to drop closer to 2%, and, this is the underrated part, the system can finally explain why it extracted what it did, which their compliance team has been begging for.

An insurance brokerage is dealing with carrier policy documents, each averaging 90 pages, with material terms scattered throughout. Their underwriters were spending two hours per policy on manual review. The new pipeline produces a structured summary with citations to specific page-and-paragraph in roughly 90 seconds. Underwriters are spending their time on the judgment calls now, not the page-flipping.

A clinical research organization deals with study protocols, regulatory filings, and adverse event reports. Their old system flagged about 60% of relevant data points correctly. Initial testing with 5.5 is showing meaningful improvement on exactly the document categories where they were weakest, multi-page tables, scientific notation, cross-references between protocol amendments.

In each case, what changed isn’t that “AI got smarter.” What changed is that the model is now reliable enough at the long tail, cheap enough at scale, and capable enough at multi-step reasoning that you can rebuild the actual workflow around it, not bolt it onto the side of an existing process.

What You Should Actually Do About It

Three honest pieces of advice.

Don’t rip out your existing extraction pipeline this quarter. Whatever you have running, it’s running. Production stability matters. But do start a parallel pilot on your hardest 10% of documents, the ones that currently require human review. That’s where 5.5’s gains are most visible and where the ROI case writes itself.

Be skeptical of “GPT-5.5-powered” badges. Vendors are already updating their landing pages. The model is one ingredient. The pipeline architecture, the evaluation harness, the human-in-the-loop tooling, the security posture, that’s where the actual difference between a working system and a demo lives. Anyone selling you the model as the product is selling you the easy part.

Take the security and governance work seriously. The 1M context window means you might be sending genuinely sensitive data, entire contract portfolios, full patient records, proprietary financials, through a third-party API. Enterprise platforms like Databricks now route GPT-5.5 calls through governed gateways with permissions, cost controls, and observability. If you’re going direct to the API, you need an equivalent layer. This isn’t optional.

The Bigger Picture

I started my company because I believed that custom software, built carefully around a specific business’s actual workflow, would always beat generic SaaS for companies with real complexity. That thesis hasn’t changed. What’s changed is the building blocks.

A year ago, building a serious document-extraction system meant orchestrating five or six models, three vendor APIs, a retrieval layer, an OCR fallback, and a small army of prompt engineers. Today, with GPT-5.5 as the reasoning core, the same system is dramatically simpler to build, dramatically cheaper to run, and dramatically better at the cases that used to require a human.

That’s not hype. That’s the actual job getting easier.

If your business is still treating document extraction as an inevitable cost of doing business, a tax you pay to be in your industry, it’s worth taking another look. The math has changed. Your competitors are noticing.